To provide the reader with a ``feel'' for the use and usefulness of the protocols package, we will begin with a motivating example: a simple Python documentation framework. To avoid getting bogged down in details, we will only sketch a skeleton of the framework, highlighting areas where the protocols package would play a part.

First, let's consider the background and requirements. Python has many documentation tools available, ranging from the built-in pydoc to third-party tools such as HappyDoc, epydoc, and Synopsis. Many of these tools generate documentation from ``live'' Python objects, some using the Python inspect module to do so.

However, such tools often encounter difficulties in the Python 2.2 world. Tools that use type() checks break with custom metaclasses, and even tools that use isinstance() break when dealing with custom descriptor types. These tools often handle other custom object types poorly as well: for example, Zope Interface objects can cause some versions of help() and pydoc to crash!

The state of Python documentation tools is an example of the problem that both PEP 246 and the protocols package were intended to solve: introspection makes frameworks brittle and unextensible. We can't easily plug new kinds of ``documentables'' into our documentation tools, or control how existing objects are documented. These are exactly the kind of problems that component adaptation and open protocols were created to address.

So let's review our requirements for the documentation framework. First, it should work with existing built-in types, without requiring a new version of Python. Second, it should allow users to control how objects are recognized and documented. Third, we want the framework to be flexible enough to create different kinds of documentation, like JavaDoc-style HTML, PDF reference manuals, plaintext online help or manpages, and so on - with whatever kinds of documentable objects exist. If user A creates a new ``documentable object,'' and user B creates a new documentation format, user C should be able to combine the two.

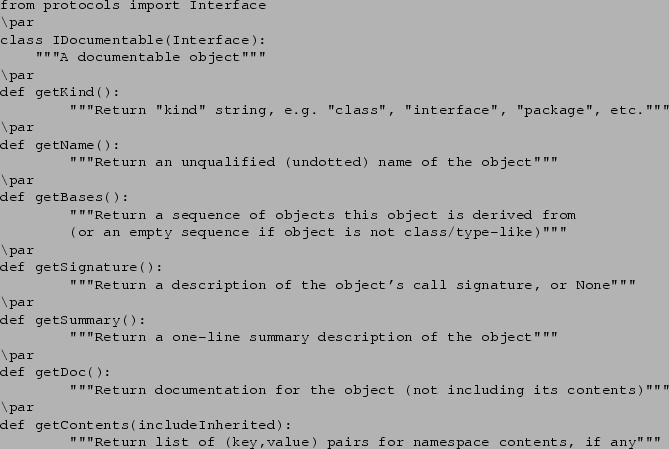

To design a framework with the protocols package, the best place to start is often with an ``ideal'' interface. We pretend that every object is already the kind of object that would do everything we need it to do. In the case of documentation, we want objects to be able to tell us their name, what kind of object they should be listed as, their base classes (if applicable), their call signature (if callable), and their contents (if a namespace).

So let's define our ideal interface, using protocols.Interface. (Note: This is not the only way to define an interface; we could also use an abstract base class, or other techniques. Interface definition is discussed further in section 1.1.4.)
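As a rough illustration, here is a minimal sketch of such an interface. Since the note above says an abstract base class is one valid way to define an interface, the sketch uses Python's standard abc module instead of protocols.Interface, so it runs without the protocols package installed. Apart from getSignature(), which is mentioned later in this section, the method names are assumptions chosen for illustration.

```python
from abc import ABC, abstractmethod


class IDocumentable(ABC):
    """An object that can describe itself to a documentation tool."""

    @abstractmethod
    def getName(self):
        """Return the object's name, as a string."""

    @abstractmethod
    def getKind(self):
        """Return the "kind" to list the object under, e.g. "function"."""

    @abstractmethod
    def getBases(self):
        """Return a sequence of base classes (empty if not applicable)."""

    @abstractmethod
    def getSignature(self):
        """Return the call signature, or None if the object is not callable."""

    @abstractmethod
    def getContents(self):
        """Return a sequence of contained objects (empty if not a namespace)."""
```

Each method corresponds to one of the questions listed above: name, kind, base classes, call signature, and contents.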

Now, in the ``real world,'' no existing Python objects provide this interface. But, if every Python object did, we'd be in great shape for writing documentation tools. The tools could focus purely on issues of formatting and organization, not ``digging up dirt'' on what to say about the objects.

Notice that we don't need to care whether an object is a type or a module or a staticmethod or something else. If a future version of Python made modules callable or functions able to somehow inherit from other functions, we'd be covered, as long as those new objects supported the methods we described. Even if a tool needs to care about the ``package'' kind vs. the ``module'' kind for formatting or organizational reasons, it can easily be written to assume that new ``kinds'' might still appear. For example, an index generator might just generate separate alphabetical indexes for each ``kind'' it encounters during processing.
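The index generator idea can be sketched in a few lines. This is only an illustration: build_indexes and the Stub class are hypothetical names, and getName() and getKind() are assumed method names for the ideal interface.

```python
from collections import defaultdict


def build_indexes(documentables):
    """Group object names by kind, sorting each group alphabetically."""
    indexes = defaultdict(list)
    for doc in documentables:
        # No hard-coded list of kinds: whatever getKind() returns gets an index.
        indexes[doc.getKind()].append(doc.getName())
    return {kind: sorted(names) for kind, names in indexes.items()}


class Stub:
    """Hypothetical stand-in for any object that provides the interface."""

    def __init__(self, name, kind):
        self._name, self._kind = name, kind

    def getName(self):
        return self._name

    def getKind(self):
        return self._kind


indexes = build_indexes([
    Stub("os", "module"),
    Stub("len", "function"),
    Stub("abs", "function"),
    Stub("Idle", "state"),  # a "kind" the tool has never heard of
])
```

The tool never asks what type an object is, so the unfamiliar ``state'' kind simply gets its own index alongside the others.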

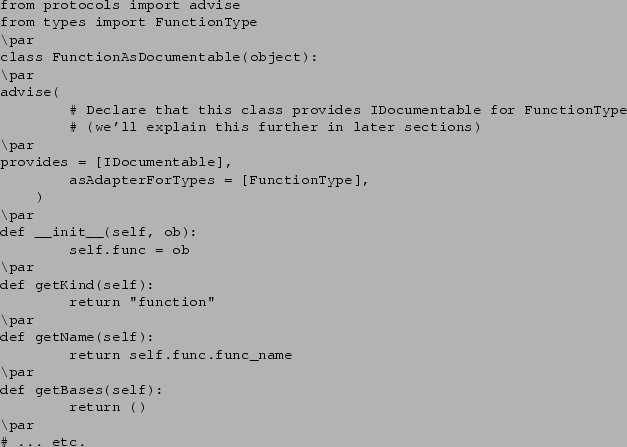

Okay, so we've envisioned our ideal scenario, and documented it as an interface. Now what? Well, we could start writing documentation tools that expect to be given objects that support the IDocumentable interface, but that wouldn't be very useful, since we don't have any IDocumentable objects yet. So let's define some adapters for built-in types, so that we have something for our tools to document.

The FunctionAsDocumentable class wraps a function object with the methods of an IDocumentable, giving us the behavior we need to document it. Now all we need to do is define similar wrappers for all the other built-in types, and for any user-defined types, and then pick the right kind of wrapper to use when given an object to document.
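For concreteness, a wrapper along these lines might look like the sketch below. It is written for a current Python using the standard inspect module, and the method names other than getSignature() are assumptions. It also leaves out the declaration step that would tell the protocols package that this class adapts functions to IDocumentable.

```python
import inspect


class FunctionAsDocumentable:
    """Wrap a plain function so it offers the IDocumentable methods."""

    def __init__(self, ob):
        self.func = ob

    def getName(self):
        return self.func.__name__

    def getKind(self):
        return "function"

    def getBases(self):
        return ()  # functions have no base classes

    def getSignature(self):
        # Render the signature as text, e.g. "(name, punctuation='!')".
        return str(inspect.signature(self.func))

    def getContents(self):
        return ()  # functions are not namespaces


def greet(name, punctuation="!"):
    return "Hello, " + name + punctuation


doc = FunctionAsDocumentable(greet)
```

A documentation tool handed doc can ask it for its name, kind, and signature without knowing that a function is underneath.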

But wait! Isn't this just as complicated as writing a documentation tool the ``old fashioned'' way? We still have to write code to get the data we need, and we still need to figure out what code to use. Where's the benefit?

Enter the PEP 246 adapt() function. adapt() has two required arguments: an object to adapt, and a protocol that you want it adapted to. Our documentation tool, when given an object to document, will simply call adapt(object, IDocumentable) and receive an instance of the appropriate adapter. (Or, if the object has already declared that it supports IDocumentable, we'll simply get the same object back from adapt() that we passed in.)
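To make the behavior concrete, here is a toy stand-in for adapt(). It is not the PEP 246 reference implementation or the protocols package API: the _adapters registry and declare_adapter() are hypothetical names, and the real adapt() does more (PEP 246 also defines __conform__ and __adapt__ hooks). The sketch only shows the two outcomes described above.

```python
import types

# Toy registry mapping (type, protocol) to an adapter factory.
_adapters = {}


def declare_adapter(factory, for_type, protocol):
    """Register factory as the way to adapt for_type to protocol."""
    _adapters[(for_type, protocol)] = factory


def adapt(obj, protocol):
    """Return obj if it already provides protocol, else a registered adapter."""
    if isinstance(obj, protocol):
        return obj
    for klass in type(obj).__mro__:
        factory = _adapters.get((klass, protocol))
        if factory is not None:
            return factory(obj)
    raise TypeError("Can't adapt %r to %s" % (obj, protocol.__name__))


class IDocumentable:
    """Marker base class standing in for the real interface."""


class FunctionAsDocumentable(IDocumentable):
    def __init__(self, ob):
        self.func = ob

    def getName(self):
        return self.func.__name__


declare_adapter(FunctionAsDocumentable, types.FunctionType, IDocumentable)


def example():
    pass


wrapped = adapt(example, IDocumentable)  # a new FunctionAsDocumentable
same = adapt(wrapped, IDocumentable)     # already IDocumentable: returned as-is
```

The calling code never names FunctionAsDocumentable; it only says which protocol it needs.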

But that's only the beginning. If we create and distribute a documentation tool based on IDocumentable, then anyone who creates a new kind of documentable object can write their own adapters, and register them via the protocols package, without changing the documentation tool, or needing to give special configuration options to the tool or call tool-specific registration functions. (Which means we don't have to design or code those options or registration functions into our tool.)

Also, let's say I use some kind of ``finite state machine'' library written by vendor A, and I'd like to use it with this new documentation tool from vendor B. I can write and register adapters from such types as ``finite state machine,'' ``state,'' and ``transition'' to IDocumentable. I can then use vendor B's tool to document vendor A's object types.

And it goes further. Suppose vendor C comes out with a new super-duper documentation framework with advanced features. To use the new features, however, a more sophisticated interface than IDocumentable is needed. So vendor C's tool requires objects to support a new ISuperDocumentable interface. What happens then? Is the new package doomed to sit idle because everybody else only has IDocumentable objects?

Heck no. At least, not if vendor C starts by defining an adapter from IDocumentable to ISuperDocumentable that supplies reasonable default behavior for ``older'' objects. Vendor C then writes adapters from built-in types to ISuperDocumentable that provide more-useful-than-default behaviors where applicable. Now, the super-framework is instantly usable with existing adapters for other object types. And, if vendor C defined ISuperDocumentable as extending IDocumentable, there's another benefit, too.

Suppose vendor A upgrades their finite state machine library to include direct or adapted support for the new ISuperDocumentable interface. Do I need to switch to vendor C's documentation tool now? Must I continue to maintain my FSM-to-IDocumentable adapters? No, to both questions. If ISuperDocumentable is a strict extension of IDocumentable, then I may use an ISuperDocumentable anywhere an IDocumentable is accepted. Thus, I can use vendor A's new library with my existing (non-``super'') documentation tool from vendor B, without needing my old adapters any more.
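The relationship between the two interfaces can be sketched with ordinary subclassing. Everything here is illustrative: getSeeAlso() is a made-up extra method, and the class names other than IDocumentable and ISuperDocumentable are hypothetical. Plain base classes stand in for interfaces declared with the protocols package.

```python
class IDocumentable:
    """Stand-in for the basic interface."""

    def getName(self):
        raise NotImplementedError


class ISuperDocumentable(IDocumentable):
    """Vendor C's extended interface, a strict extension of IDocumentable."""

    def getSeeAlso(self):
        raise NotImplementedError


class DocumentableAsSuper(ISuperDocumentable):
    """Default adapter: lets any IDocumentable serve as an ISuperDocumentable."""

    def __init__(self, doc):
        self.doc = doc

    def getName(self):
        return self.doc.getName()

    def getSeeAlso(self):
        return []  # reasonable default for "older" objects


class StateMachineDoc(ISuperDocumentable):
    """Vendor A's upgraded object, providing the extended interface directly."""

    def getName(self):
        return "TrafficLight"

    def getSeeAlso(self):
        return ["State", "Transition"]


class OldDoc(IDocumentable):
    """An "older" object that only knows the basic interface."""

    def getName(self):
        return "legacy"


def basic_tool(doc):
    """Vendor B's tool: it only knows about IDocumentable."""
    assert isinstance(doc, IDocumentable)
    return "Documenting " + doc.getName()
```

Because StateMachineDoc provides the extended interface, basic_tool() accepts it with no adapter at all, while DocumentableAsSuper gives older objects a default answer for the new method.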

As you can see, replacing introspection with adaptation makes frameworks more flexible and extensible. It also simplifies the design process, by letting you focus on what you want to do, instead of on the details of which objects are doing it.

As we mentioned at the beginning of this section, this example is only a sketch of a skeleton of a real documentation framework. For example, a real documentation framework would also need to define an ISignature interface for objects returned from getSignature(). We've also glossed over many other issues that the designers of a real documentation framework would face, in order to focus on the problems that can be readily solved with adaptable components.

And that's our point, actually. Every framework has two kinds of design issues: the ones that are specific to the framework's subject area, and the ones that would apply to any framework. The protocols package can save you a lot of work dealing with the latter, so you can spend more time focusing on the former. Let's start looking at how, beginning with the concepts of ``protocols'' and ``interfaces''.